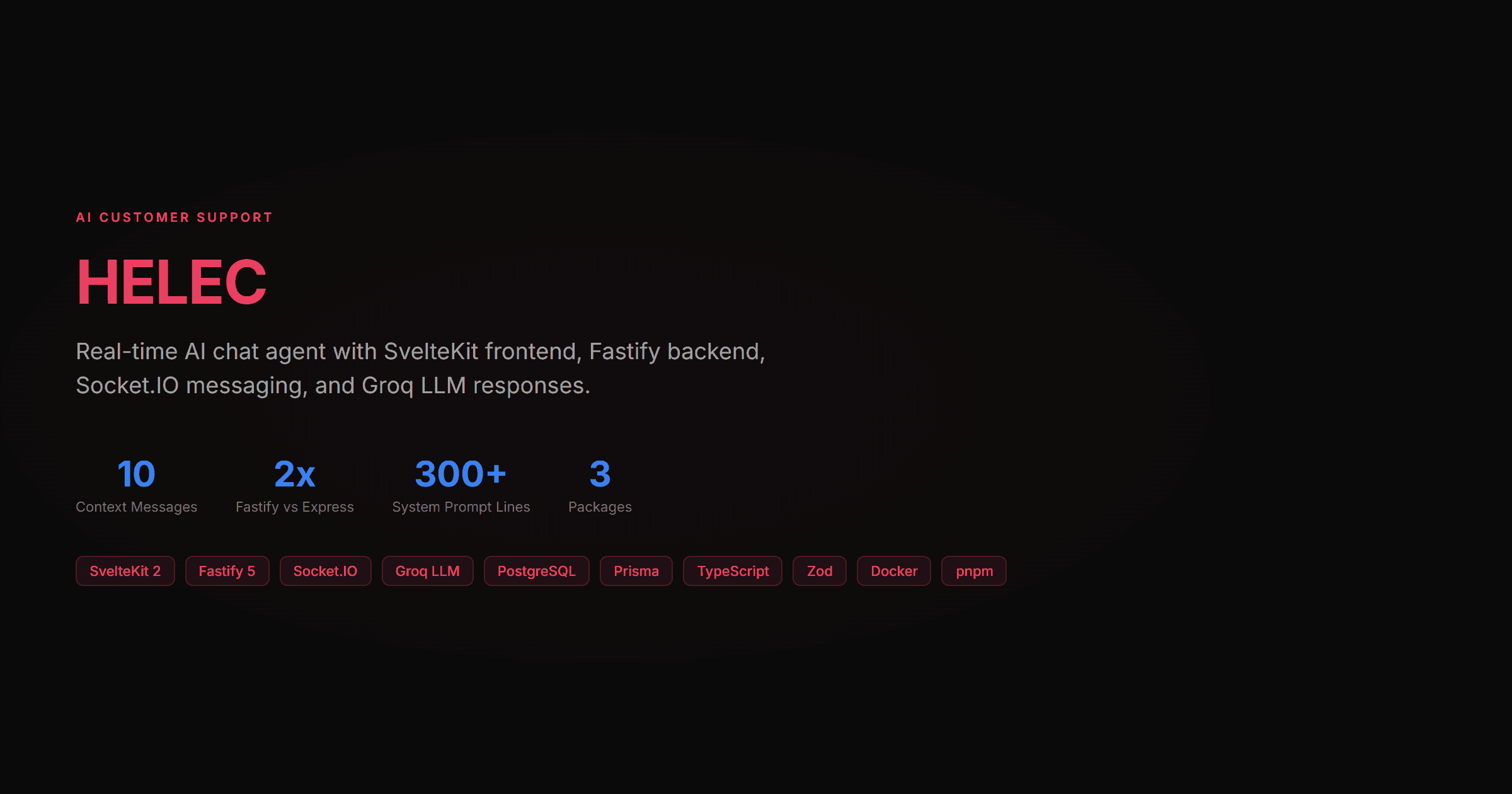

HELEC (Helpful E-commerce Live Engine for Customers) is a real-time, AI-powered customer support system I built as a pnpm monorepo with TypeScript types shared between the frontend and backend. I chose SvelteKit over React for the frontend because, in my experience, Svelte's built-in reactivity and stores handle WebSocket state more cleanly than wiring up the same logic with React's useEffect.

The frontend is a floating chat widget (SvelteKit 2 with Svelte 5, Tailwind CSS, DaisyUI) that sits in the bottom-right corner of any e-commerce store. Users click the bubble, type a question, and get AI-powered responses in real time. The widget handles session persistence through localStorage, shows typing indicators, auto-scrolls to the latest message, and supports conversation resets.
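The session persistence and reset behavior can be sketched as below. This is a minimal illustration, not the widget's actual code: the storage key `helec:conversationId`, the `KeyValueStore` interface, and both function names are assumptions made for the example.

```typescript
// Minimal subset of the Web Storage API; window.localStorage satisfies it.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
}

// Hypothetical storage key; the real widget may use a different one.
const SESSION_KEY = "helec:conversationId";

// Return the stored conversation ID, or create and persist a new one.
function getOrCreateConversationId(
  store: KeyValueStore,
  newId: () => string,
): string {
  const existing = store.getItem(SESSION_KEY);
  if (existing !== null && existing !== "") {
    return existing;
  }
  const id = newId();
  store.setItem(SESSION_KEY, id);
  return id;
}

// Forget the current conversation so the next call starts a fresh one.
function resetConversation(store: KeyValueStore): void {
  store.removeItem(SESSION_KEY);
}
```

In the browser this would be called as `getOrCreateConversationId(window.localStorage, () => crypto.randomUUID())`. Passing the store and ID generator in as parameters keeps the logic testable outside a browser.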

The backend runs Fastify 5, which in published benchmarks handles roughly twice the throughput of Express (the exact ratio depends on the workload). Socket.IO handles bidirectional real-time communication. When a user sends a message, the server creates the conversation if it is new, loads the last 10 messages from PostgreSQL for context, sends that history to Groq's API, and streams the AI response back over the Socket.IO connection.
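The context-building step might look roughly like this. It is a sketch under assumptions: `StoredMessage`, `buildChatMessages`, and the `createdAt` field are hypothetical names, and it assumes the incoming user message has already been saved, so it appears in the history. The `system` / `user` / `assistant` roles follow the chat-completions message format that Groq's API accepts.

```typescript
type Role = "system" | "user" | "assistant";

interface ChatMessage {
  role: Role;
  content: string;
}

// Hypothetical shape of a persisted message row.
interface StoredMessage {
  role: "user" | "assistant";
  content: string;
  createdAt: number; // epoch milliseconds
}

const CONTEXT_LIMIT = 10;

// Build the message array for the LLM: system prompt first, then the
// most recent `limit` messages in chronological order.
function buildChatMessages(
  systemPrompt: string,
  history: StoredMessage[],
  limit: number = CONTEXT_LIMIT,
): ChatMessage[] {
  const sorted = [...history].sort((a, b) => a.createdAt - b.createdAt);
  // slice(-0) would return everything, so handle non-positive limits explicitly.
  const recent = limit > 0 ? sorted.slice(-limit) : [];
  const context: ChatMessage[] = recent.map(({ role, content }) => ({ role, content }));
  return [{ role: "system", content: systemPrompt }, ...context];
}
```

Capping the history keeps each request small and the latency predictable, at the cost of the model forgetting anything older than the cap.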

I chose Groq (llama-3.3-70b-versatile) over OpenAI for inference speed. Groq runs on custom LPU hardware and can return responses roughly 10x faster than GPT-4. The system prompt is 300+ lines covering products, shipping policies, return procedures, payment options, and product recommendations specific to the e-commerce store.

The database schema is minimal: Conversation and Message models in Prisma with cascade deletes. PostgreSQL 16 runs in Docker Compose for local development. Input validation uses Zod for every endpoint, and UUID validation catches malformed conversation IDs before they hit the database.
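To illustrate the kind of check that stops a malformed conversation ID before it reaches the database, here is a dependency-free stand-in. It is not the project's Zod schema: in Zod the equivalent would typically be an object schema using `z.string().uuid()`. The payload shape (`conversationId`, `content`) and the name `parseSendMessage` are assumptions.

```typescript
// Matches RFC 4122-style UUIDs (versions 1-8, standard variant).
const UUID_PATTERN =
  /^[0-9a-f]{8}-[0-9a-f]{4}-[1-8][0-9a-f]{3}-[89ab][0-9a-f]{3}-[0-9a-f]{12}$/i;

// Result shape loosely modeled on a "safe parse" style API.
type ParseResult<T> =
  | { success: true; data: T }
  | { success: false; error: string };

// Hypothetical payload for a send_message event.
interface SendMessageInput {
  conversationId: string;
  content: string;
}

function parseSendMessage(input: unknown): ParseResult<SendMessageInput> {
  if (typeof input !== "object" || input === null) {
    return { success: false, error: "payload must be an object" };
  }
  const { conversationId, content } = input as Record<string, unknown>;
  if (typeof conversationId !== "string" || !UUID_PATTERN.test(conversationId)) {
    return { success: false, error: "conversationId must be a UUID" };
  }
  if (typeof content !== "string" || content.trim().length === 0) {
    return { success: false, error: "content must be a non-empty string" };
  }
  return { success: true, data: { conversationId, content: content.trim() } };
}
```

Rejecting bad input at the boundary means the database layer only ever sees well-formed IDs, so a typo in a URL becomes a validation error rather than a query error.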

REST endpoints handle conversation retrieval and health checks, while Socket.IO events handle the real-time message flow: join_conversation, send_message, typing indicators, and error propagation.
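Since the frontend and backend share TypeScript types, the event contract could live in one shared module along these lines. Only `join_conversation` and `send_message` are event names taken from the description above; `typing`, `ai_response`, `error`, and the payload shapes are placeholders I am assuming for the sketch. Interfaces like these can be passed as type parameters to Socket.IO's `Server` and `Socket` to get typed events.

```typescript
// Events the widget sends to the server.
interface ClientToServerEvents {
  join_conversation: (conversationId: string) => void;
  send_message: (payload: { conversationId: string; content: string }) => void;
  typing: (payload: { conversationId: string; isTyping: boolean }) => void; // assumed name
}

// Events the server sends to the widget (names assumed for illustration).
interface ServerToClientEvents {
  ai_response: (payload: { conversationId: string; content: string }) => void;
  typing: (payload: { conversationId: string; isTyping: boolean }) => void;
  error: (payload: { message: string }) => void;
}

// Runtime list of client event names, kept next to the types.
const CLIENT_EVENTS = ["join_conversation", "send_message", "typing"] as const;
type ClientEventName = (typeof CLIENT_EVENTS)[number];

// Type guard for event names that arrive as plain strings (e.g. in logs).
function isClientEvent(name: string): name is ClientEventName {
  return (CLIENT_EVENTS as readonly string[]).indexOf(name) !== -1;
}
```

Keeping the names and payload types in one place means a renamed event fails at compile time on both sides instead of silently going unhandled at runtime.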

Key Features

- Floating chat widget with typing indicators and auto-scroll

- Groq LLM (llama-3.3-70b-versatile) with the last 10 messages sent as conversation context

- Socket.IO real-time bidirectional messaging

- pnpm monorepo with shared TypeScript types

- Fastify 5 backend (roughly 2x Express throughput in published benchmarks)

- PostgreSQL conversation persistence with cascade deletes

- Zod input validation on all endpoints

- Docker Compose for local PostgreSQL

- 300+ line system prompt for domain-specific responses

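Tech Stack

- Frontend: SvelteKit 2, Svelte 5, Tailwind CSS, DaisyUI

- Backend: Fastify 5, Socket.IO, Zod

- AI: Groq (llama-3.3-70b-versatile)

- Data: PostgreSQL 16, Prisma

- Tooling: pnpm monorepo, TypeScript, Docker Compose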
